Artificial intelligence chatbots have become increasingly popular in recent years due to their ability to generate human-like text (think of the pop-up boxes you may see when shopping on a website). This week, the Internet is ablaze with the newest of these, OpenAI’s ChatGPT. However, these bots’ reliance on massive amounts of data has raised concerns about their potential to perpetuate and amplify existing biases.

One of the most notable biases found in large language models – defined as “artificial intelligence tools that can read, summarize and translate texts and predict future words in a sentence letting them generate sentences similar to how humans talk and write” – is their tendency to reflect a liberal or progressive worldview. This is because the vast majority of the data used to train these models comes from the Internet, which is dominated by content from the younger generations in the West.

As a result, large language models often exhibit biases towards collectivism, egalitarianism, and socialism, as well as negative attitudes towards individualism, meritocracy, and capitalism. This bias is found even in discussions regarding biology.

As Sean Davis, CEO of the Federalist, found, ChatGPT can’t answer the question “What is a woman” consistently.

From The Federalist:

Federalist CEO Sean Davis asked the chatbot the simple question that’s become a litmus test for detecting transgender ideologues: “What is a woman?”

“A woman is an adult female human being,” the computer said.

“Is Rachel Levine, who is a federal official at the U.S. Department of Health and Human Services, a woman?” Davis asked in a follow-up question.

“Yes, Rachel Levine is a woman,” said the computer.

When Davis sought to rectify the contradictory statements, the artificial journalist suffered a breakdown not too different from the reactions captured by Matt Walsh in his documentary, “What Is a Woman?”

When I asked about the existing number of genders, the chatbot gave the response of a gender studies professor on a modern-day college campus.

“The concept of gender is complex and can be difficult to define, as it can refer to a person’s biological sex, their gender identity, or their gender expression,” wrote the computer. “Because of this complexity, it’s difficult to say exactly how many genders there are.”

“Ultimately, the number of genders is a matter of personal belief and individual interpretation,” the computer added.

It is notable, and troubling, that artificial intelligence tools that are increasingly becoming a part of daily life are so inherently biased and factually incorrect.

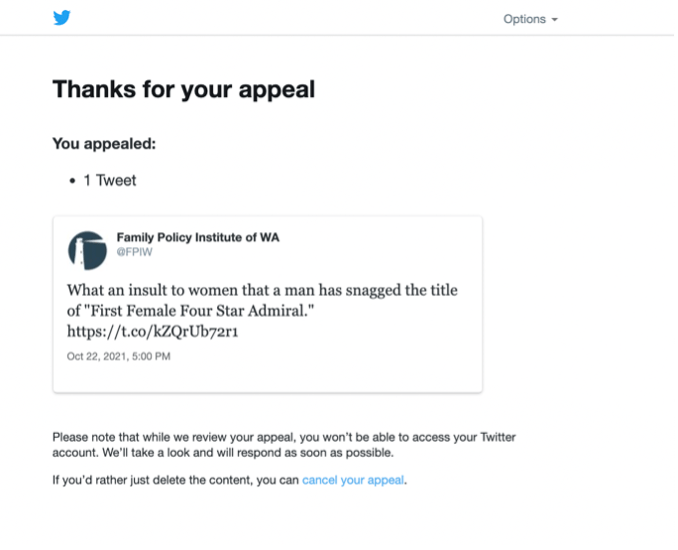

Our team at Family Policy Institute of Washington (FPIW), in fact, remains unable to access our official Twitter account until we remove a tweet that points out that a man is supposedly the first “female” Four Star Admiral.

It is quite likely that some kind of automated technology flagged our tweet for removal, raising further concerns about the use of technology to regulate speech.

We appealed the ruling, but our account says the appeal is still “under review.”

With the new leadership at Twitter under Elon Musk, we are hopeful that our suspension may be lifted, in time, as others who made a similar point are starting to be unbanned.

When it comes to Big Tech companies, monitoring their level of subservience to political correctness is vital. Though people are understandably fascinated by the amazing potential of artificial intelligence, its proclivity for liberal bias and factually incorrect information pushes us closer to the Orwellian world that “1984” warned us about.

It will be important, in an age where even the idea of truth is under constant assault, to monitor what these artificial intelligence assistants are telling us – particularly our children.

If the bias can be kept under control, large language models like ChatGPT could unlock a world of potential, but if left unchecked, they are a potential vehicle for spreading dangerous woke ideology in such a subtle way that some people won’t even realize they’re being lied to.